In the post “What is the nature of mathematics”, the dilemma of whether mathematics is discovered or invented by humans was raised, but no convincing evidence was provided in either direction.

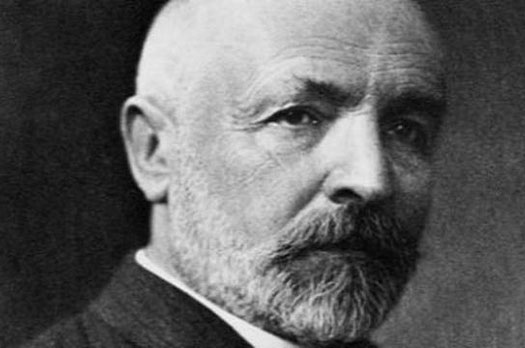

A more profound way of approaching the issue is as posed by Eugene P. Wigner [1], asking about the unreasonable effectiveness of mathematics in the natural sciences.

According to Roger Penrose this poses three mysteries [2] [3], identifying three distinct “worlds”: the world of our conscious perception, the physical world and the Platonic world of mathematical forms. Thus:

- The world of physical reality seems to obey laws that actually reside in the world of mathematical forms.

- The perceiving minds themselves – the realm of our conscious perception – have managed to emerge from the physical world.

- Those same minds have been able to access the mathematical world by discovering, or creating, and articulating a capital of mathematical forms and concepts.

The effectiveness of mathematics has two different aspects. The first is an active one, in which physicists develop mathematical models that allow them not only to describe the behavior of physical phenomena accurately but also to make predictions about them, which is a striking fact.

Even more extraordinary, however, is the passive aspect: concepts that mathematicians explore in a purely abstract way end up being the solutions to problems firmly rooted in physical reality.

But this view of mathematics has detractors especially outside the field of physics, in areas where mathematics does not seem to have this behavior. Thus, the neurobiologist Jean-Pierre Changeux notes [4], “Asserting the physical reality of mathematical objects on the same level as the natural phenomena studied in biology raises, in my opinion, a considerable epistemological problem. How can an internal physical state of our brain represent another physical state external to it?”

It seems that analyzing the problem through case studies from different areas of knowledge does not allow us to establish formal arguments about the nature of mathematics. For this reason, an abstract method must be sought to overcome these difficulties. In this sense, Information Theory (IT) [5], Algorithmic Information Theory (AIT) [6] and Theory of Computation (TC) [7] can serve as tools of analysis that help to solve the problem.

What do we understand by mathematics?

The question may seem obvious, but mathematics is structured in multiple areas: algebra, logic, calculus, etc. In truth, when we refer to the success of mathematics in the field of physics, what we have in mind are physical theories supported by mathematical models: quantum physics, electromagnetism, general relativity, etc.

However, when these mathematical models are applied in other areas they do not seem to have the same effectiveness, for example in biology, sociology or finance, which seems to contradict the experience in the field of physics.

For this reason, a fundamental question is how these models work and what hinders their application outside the field of physics. To answer it, let us take any of the successful models of physics, such as the theory of gravitation, electromagnetism, quantum physics or general relativity. These models are based on a set of equations, written in mathematical language, that define the laws governing the phenomenon described and that admit analytical solutions describing the dynamics of the system. Thus, for example, a body subjected to a central attractive force that follows an inverse-square law describes a trajectory defined by a conic.
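
As a worked illustration of the conic trajectory, and assuming the force is inverse-square (potential $V(r) = -k/r$ with $k > 0$), the orbit in polar coordinates takes the standard form below. The symbols $\mu$ (reduced mass), $L$ (angular momentum) and $E$ (total energy) are introduced here only for illustration and do not appear in the original text.

$$
r(\theta) = \frac{p}{1 + e\cos\theta}, \qquad p = \frac{L^{2}}{\mu k}, \qquad e = \sqrt{1 + \frac{2 E L^{2}}{\mu k^{2}}}
$$

The eccentricity $e$ selects the conic: $e = 0$ gives a circle, $0 < e < 1$ an ellipse, $e = 1$ a parabola and $e > 1$ a hyperbola.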

This capability is a powerful tool, since it makes it possible to analyze systems under hypothetical conditions and to reach conclusions that can later be verified experimentally. But beware! This success masks a reality that often goes unnoticed: the scenarios in which the model admits an analytical solution are generally very limited. Thus, the gravitational model does not admit a general analytical solution when the number of bodies is n ≥ 3 [8], except in very specific cases such as the so-called Lagrange points. Moreover, the system is very sensitive to initial conditions, so that small variations in these conditions can produce large deviations in the long term.

This is a fundamental characteristic of nonlinear systems and, although the system is governed by deterministic laws, its behavior is chaotic. Without going into details that are beyond the scope of this analysis, this is the general behavior of the cosmos and everything that happens in it.
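
Sensitivity to initial conditions in a deterministic system can be seen in a few lines of code. The sketch below does not simulate gravitation; it uses the logistic map, a much simpler nonlinear system chosen here as a stand-in, and the names `logistic_orbit` and `divergence` as well as the parameter values are assumptions made for the example.

```python
def logistic_orbit(x0, r=4.0, steps=60):
    """Iterate the deterministic rule x -> r * x * (1 - x) starting from x0."""
    xs = [x0]
    for _ in range(steps):
        xs.append(r * xs[-1] * (1.0 - xs[-1]))
    return xs


def divergence(x0=0.2, eps=1e-10, steps=60):
    """Gap between two orbits whose starting points differ by eps."""
    a = logistic_orbit(x0, steps=steps)
    b = logistic_orbit(x0 + eps, steps=steps)
    return [abs(u - v) for u, v in zip(a, b)]


gaps = divergence()
# The rule is fully deterministic, yet the tiny initial gap grows
# by many orders of magnitude within a few dozen iterations.
print(f"initial gap: {gaps[0]:.1e}, largest gap: {max(gaps):.3f}")
```

Both orbits follow exactly the same law; only the tenth decimal of the starting point differs, which is the situation described above for the n-body problem.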

One case that can be considered extraordinary is the quantum model which, according to the Schrödinger equation or the Heisenberg matrix model, is a linear and reversible model. However, the information that emerges from quantum reality is stochastic in nature.

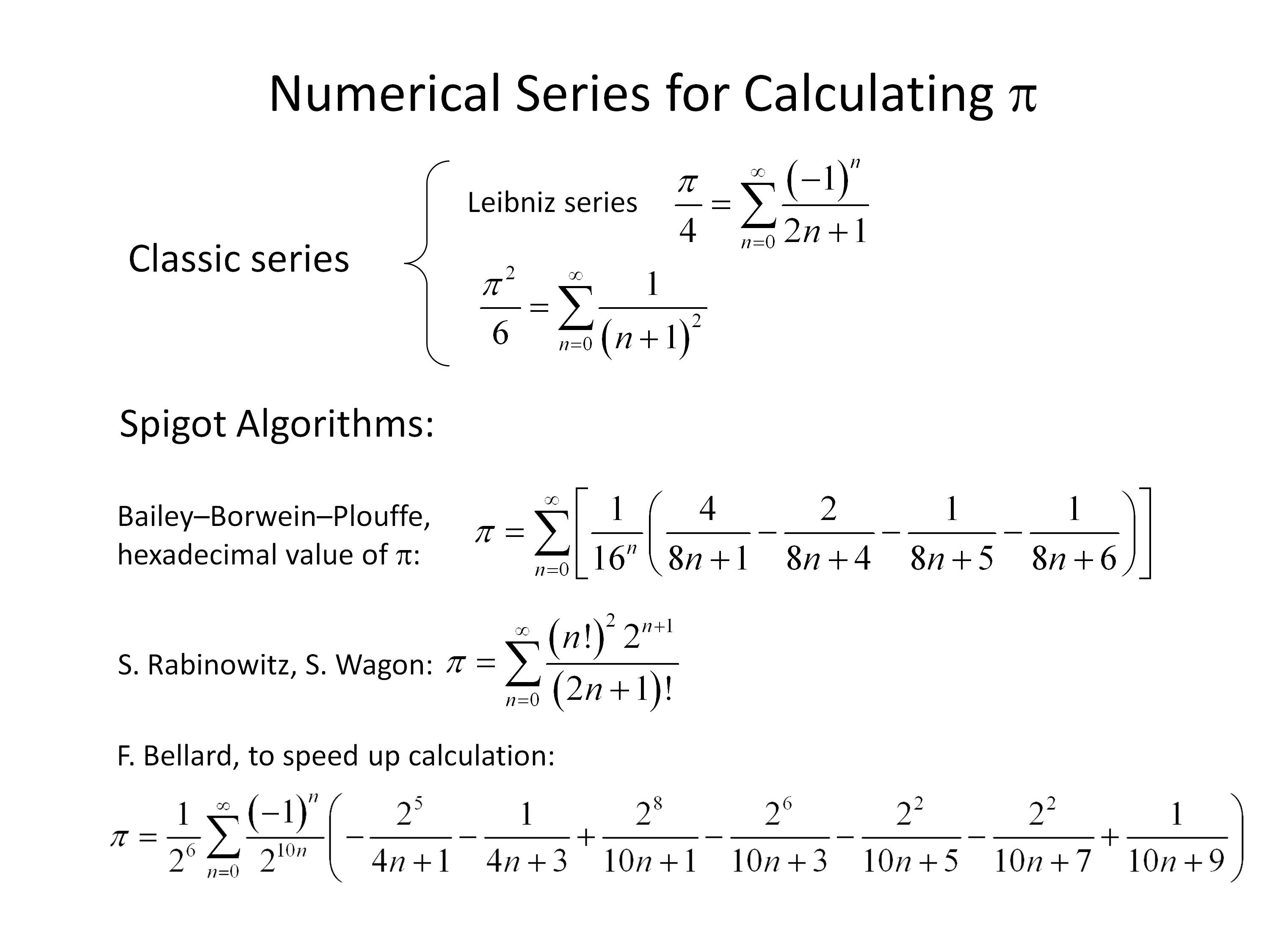

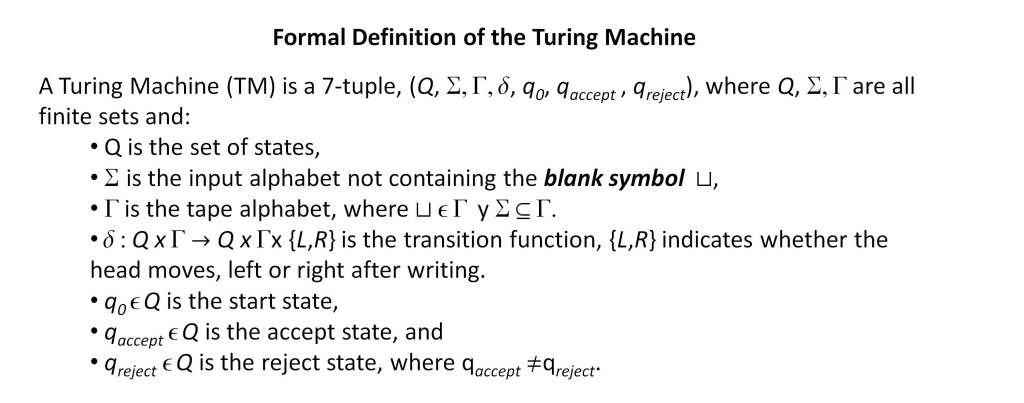

In short, the models that describe physical reality only have an analytical solution in very particular cases. For complex scenarios, particular solutions to the problem can be obtained by numerical series, but in general any solution that can be computed at all can be obtained by a Turing Machine (TM) [9].

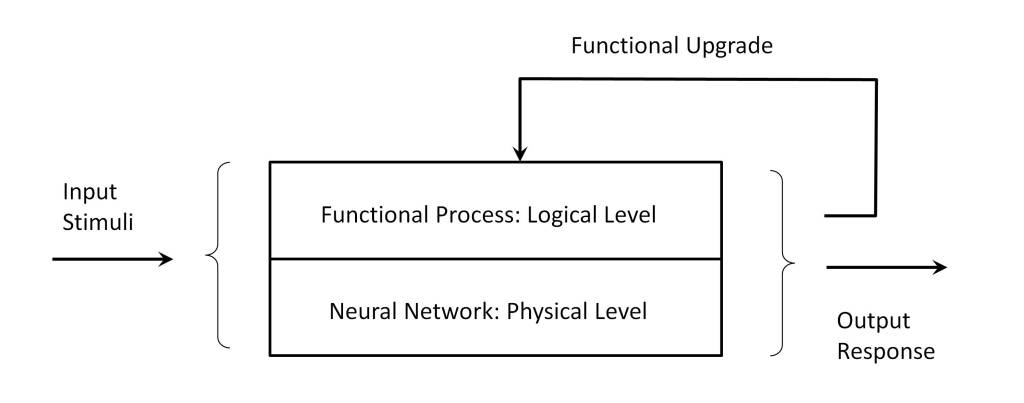

This model can be represented in an abstract form by the concatenation of three mathematical objects 〈xyz〉 (bit sequences) which, when executed on a Turing machine, TM(〈xyz〉), determine the solution. Thus, for example, in the case of electromagnetism, the object z corresponds to the description of the boundary conditions of the system, y to the definition of Maxwell’s equations and x to the formal definition of the mathematical calculus. TM is the Turing machine, defined by a finite set of states. The problem is therefore reduced to the processing of a set of bits 〈xyz〉 according to axiomatic rules defined in TM, which in the optimal case can be reduced to a machine with three states (plus the HALT state) and two symbols (bits).
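
To make the idea of "bits processed by a small set of rules" concrete, here is a minimal sketch of a Turing machine simulator. The rule table is the well-known three-state, two-symbol busy beaver, picked only because it has the size mentioned above; it is not a universal machine, and the function name `run_turing_machine` and the tape representation are assumptions made for this example.

```python
def run_turing_machine(rules, state="A", halt="HALT", max_steps=1000):
    """Run a one-tape Turing machine on an initially blank (all-zero) tape.

    rules maps (state, symbol) -> (symbol_to_write, move, next_state),
    where move is "L" or "R".
    """
    tape = {}   # sparse tape: position -> bit, missing cells read as 0
    head = 0
    steps = 0
    while state != halt and steps < max_steps:
        symbol = tape.get(head, 0)
        write, move, state = rules[(state, symbol)]
        tape[head] = write
        head += 1 if move == "R" else -1
        steps += 1
    return tape, steps, state


# Three working states (A, B, C) plus HALT, two symbols (0 and 1).
BUSY_BEAVER_3 = {
    ("A", 0): (1, "R", "B"), ("A", 1): (1, "L", "C"),
    ("B", 0): (1, "L", "A"), ("B", 1): (1, "R", "B"),
    ("C", 0): (1, "L", "B"), ("C", 1): (1, "R", "HALT"),
}

tape, steps, final_state = run_turing_machine(BUSY_BEAVER_3)
ones = sum(tape.values())
print(ones, steps, final_state)  # 6 13 HALT
```

The whole machine is the six-entry table; everything else is bookkeeping for the tape and the head.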

Nature as a Turing machine

And here we return to the starting point. How is it possible that reality can be represented by a set of bits and a small number of axiomatic rules?

Prior to the development of IT, the concept of information had no formal meaning, as evidenced by its classic dictionary definition. In fact, until communication technologies began to develop, words such as “send” referred exclusively to material objects.

However, everything that happens in the universe is interaction and transfer, and in the case of humans the most elaborate medium for this interaction is natural language, which we consider to be the most important milestone on which cultural development is based. It is perhaps for this reason that in the debate about whether mathematics is invented or discovered, natural language is used as an argument.

But TC shows that natural language is not formal, not being defined on axiomatic grounds, so that arguments based on it may be of questionable validity. And it is here that IT and TC provide a broad view on the problem posed.

In a physical system each of the component particles has physical properties and a state, in such a way that when it interacts with the environment it modifies its state according to its properties, its state and the external physical interaction. This interaction process is reciprocal and as a consequence of the whole set of interactions the system develops a temporal dynamics.

Thus, for example, the dynamics of a particle is determined by the curvature of space-time which indicates to the particle how it should move and this in turn interacts with space-time, modifying its curvature.

In short, a system has a description that is distributed in each of the parts that make up the system. Thus, the system could be described in several different ways:

- As a set of TMs interacting with each other.

- As a TM describing the total system.

- As a TM partially describing the global behavior, showing emergent properties of the system.
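
The first two descriptions in the list can be illustrated with an elementary cellular automaton, used here as an assumed stand-in for a physical system: each cell is a tiny machine that only looks at its neighbors, while `step` describes the system as a whole. Rule 110 is chosen because it is known to be Turing-complete; the function names and the zero boundary condition are choices made for this sketch.

```python
def step(cells, rule=110):
    """Advance every cell one tick using only its left and right neighbors."""
    padded = [0] + cells + [0]          # cells outside the row count as 0
    out = []
    for i in range(1, len(padded) - 1):
        # Encode (left, center, right) as a number from 0 to 7.
        neighborhood = (padded[i - 1] << 2) | (padded[i] << 1) | padded[i + 1]
        # The rule number's bits are the lookup table for the 8 neighborhoods.
        out.append((rule >> neighborhood) & 1)
    return out


def evolve(cells, generations, rule=110):
    """Global description: the list of successive states of the whole row."""
    history = [cells]
    for _ in range(generations):
        history.append(step(history[-1], rule))
    return history


for row in evolve([0, 0, 0, 0, 0, 0, 0, 1], 3):
    print("".join("#" if c else "." for c in row))
```

The local rule fits in a single byte, yet the pattern that emerges is a property of the row as a whole, which is the sense of the third description.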

The fundamental conclusion is that the system is a Turing machine. Therefore, the question is not whether mathematics is discovered or invented, nor how it is possible for mathematics to be so effective in describing the system. The question is how it is possible for an intelligent entity, natural or artificial, to reach this conclusion and even to deduce the axiomatic laws that control the system.

The justification must be based on the fact that it is nature that imposes the functionality and not the intelligent entities that are part of nature. Nature is capable of developing any computable functionality, so that among other functionalities, learning and recognition of behavioral patterns is a basic functionality of nature. In this way, nature develops a complex dynamic from which physical behavior, biology, living beings, and intelligent entities emerge.

As a consequence, nature has created structures that are able to identify its own patterns of behavior, such as physical laws, and ultimately to identify nature as a Universal Turing Machine (UTM). This is what makes physical interaction consistent at all levels. Thus, for example, the ability of living beings to build a spatio-temporal map allows them to interact with the environment; without it their existence would not be possible. This map corresponds to a Euclidean space, but if the living being in question were able to move at speeds close to that of light, the map learned would correspond to the one described by relativity.

A view beyond physics

While TC, IT and AIT are the theoretical support that allows sustaining this view of nature, advances in computer technology and AI are a source of inspiration, showing how reality can be described as a structured sequence of bits. This in turn enables functions such as pattern extraction and recognition, complexity determination and machine learning.

Despite this, fundamental questions remain to be answered, in particular what happens in those cases where mathematics does not seem to have the same success as in the case of physics, such as biology, economics or sociology.

Many of the arguments used against the previous view are based on the fact that the description of reality in mathematical terms, or rather, in terms of computational concepts does not seem to fit, or at least not precisely, in areas of knowledge beyond physics. However, it is necessary to recognize that very significant advances have been made in areas such as biology and economics.

Thus, knowledge of biology shows that the chemistry of life is structured in several overlapping languages:

- The language of nucleic acids, consisting of an alphabet of 4 symbols that encodes the structure of DNA and RNA.

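- The amino acid language, consisting of an alphabet of 20 symbols that encodes proteins. Translation during protein synthesis is carried out by means of a correspondence between both languages, the genetic code, in which the 64 possible nucleotide triplets (codons) are mapped to amino acids and stop signals.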

- The language of the intergenic regions of the genome. Their functionality is still to be clarified, but everything seems to indicate that they are responsible for the control of protein production in different parts of the body, through the activation of molecular switches.
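
The correspondence between the nucleic acid and amino acid languages can be sketched as a lookup table. The example below is a toy: `CODON_TABLE` contains only a handful of the 64 entries of the standard genetic code, and the names `translate` and `ALL_CODONS` are assumptions made for this illustration.

```python
from itertools import product

NUCLEOTIDES = "ACGU"  # the 4-symbol RNA alphabet
# Every word of length 3 over that alphabet: 4 ** 3 = 64 codons.
ALL_CODONS = ["".join(c) for c in product(NUCLEOTIDES, repeat=3)]

# A small excerpt of the standard genetic code; None marks a stop codon.
CODON_TABLE = {
    "AUG": "Met", "UUU": "Phe", "GGC": "Gly", "GCU": "Ala",
    "UAA": None, "UAG": None, "UGA": None,
}


def translate(mrna):
    """Read an mRNA string three letters at a time until a stop codon.

    Raises KeyError for codons missing from the excerpt above.
    """
    protein = []
    for i in range(0, len(mrna) - 2, 3):
        residue = CODON_TABLE[mrna[i:i + 3]]
        if residue is None:
            break
        protein.append(residue)
    return protein


print(len(ALL_CODONS))            # 64
print(translate("AUGUUUGGCUAA"))  # ['Met', 'Phe', 'Gly']
```

Since 64 codons map onto 20 amino acids plus stop signals, the code is redundant: several codons stand for the same amino acid.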

On the other hand, protein structure prediction by deep learning techniques is solid evidence linking biology to TC [10]. It should also be emphasized that biology, as an information process, must obey the laws of logic, in particular the recursion theorem [11], so DNA replication must be performed in at least two phases by independent processes.
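
The two-part structure behind self-reproduction can be shown with a program that prints its own source. This is only an analogy for the replication argument, not a model of DNA: the string `template` plays the role of a passive description, and the `print` statement is the active process that uses the description twice, once as data and once as instructions. The helper `run_and_capture` is an assumption added to check the result.

```python
import contextlib
import io

# Passive part: a description of the program with a hole (%r) for itself.
template = 't = %r\nprint(t %% t)'
# Filling the hole with the description yields the complete program text.
program = template % template


def run_and_capture(source):
    """Execute source in a fresh namespace and return what it prints."""
    buffer = io.StringIO()
    with contextlib.redirect_stdout(buffer):
        exec(source, {})
    return buffer.getvalue()


output = run_and_capture(program)
print(output == program + "\n")  # True: the program reproduces its own text
```

Neither part alone is enough: the description cannot act, and the printing step has nothing to copy without the description.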

In the case of economics there have been relevant advances since the 1980s, with the development of computational finance [12]. But as a paradigmatic example we will focus on the financial markets, which should serve to test, in an environment far from physics, the hypothesis that nature behaves as a Turing machine.

Basically, financial markets are a space, which can be physical or virtual, through which financial assets are exchanged between economic agents and in which the prices of such assets are defined.

A financial market is governed by the law of supply and demand. In other words, when an economic agent wants something at a certain price, he can only buy it at that price if there is another agent willing to sell him that something at that price.

Traditionally, economic agents were individuals but, with the development of complex computer applications, these applications now also act as economic agents, both supervised and unsupervised, giving rise to different types of investment strategies.

This system can be modeled by a Turing machine that emulates all the economic agents involved, or as a set of Turing machines interacting with each other, each of which emulates an economic agent.
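
A toy version of such a model is sketched below, under strong simplifying assumptions: each agent is reduced to a single limit price, and the market rule is that a trade happens only when a buyer's limit is at least a seller's limit. The names `Agent` and `match_orders` and the midpoint pricing are hypothetical choices for this illustration, not a description of any real exchange.

```python
from dataclasses import dataclass


@dataclass
class Agent:
    name: str
    side: str     # "buy" or "sell"
    limit: float  # highest price a buyer pays / lowest price a seller accepts


def match_orders(agents):
    """Pair the most eager buyers with the cheapest sellers while prices cross."""
    buyers = sorted((a for a in agents if a.side == "buy"),
                    key=lambda a: -a.limit)
    sellers = sorted((a for a in agents if a.side == "sell"),
                     key=lambda a: a.limit)
    trades = []
    for buyer, seller in zip(buyers, sellers):
        if buyer.limit < seller.limit:
            break  # no remaining pair can agree on a price
        # Assumed pricing rule: split the difference between the two limits.
        trades.append((buyer.name, seller.name, (buyer.limit + seller.limit) / 2))
    return trades


agents = [
    Agent("A", "buy", 10.0), Agent("B", "buy", 8.0),
    Agent("C", "sell", 9.0), Agent("D", "sell", 7.0),
]
print(match_orders(agents))  # [('A', 'D', 8.5)]
```

The market rule itself is a few lines; what the sketch leaves out is how each agent arrives at its limit price.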

The definition of this model requires implementing the axiomatic rules of the market, as well as the functionality of each of the economic agents, which allow them to determine the purchase or sale prices at which they are willing to negotiate. This is where the problem lies, since this depends on very diverse and complex factors, such as the availability of information on the securities traded, the agent’s psychology and many other factors such as contingencies or speculative strategies.

In brief, this makes emulation of the system impossible in practice. It should be noted, however, that brokers and automated applications can gain a competitive advantage by identifying global patterns, or even by insider trading, although this practice is punishable by law in suitably regulated markets.

The question that can be raised is whether this impossibility of precise emulation invalidates the hypothesis put forward. If we return to the case study of Newtonian gravitation, determined by the central attractive force, it can be observed that, although functionally different, it shares a fundamental characteristic that makes emulation of the system impossible in practice and that is present in all scenarios.

If we intend to emulate the solar system, we must determine the position, velocity and angular momentum of all the celestial bodies involved: the Sun, planets, dwarf planets, planetoids and satellites, as well as the rest of the bodies located in the system, such as the asteroid belt, the Kuiper belt and the Oort cloud, together with the dispersed mass and energy. In addition, the shape and structure of solid, liquid and gaseous bodies must be determined. It will also be necessary to consider the effects of collisions, which modify the structure of the resulting bodies. Finally, it will be necessary to consider physicochemical activity, such as geological, biological and radiation phenomena, since they modify the structure and dynamics of the bodies and are subject to quantum phenomena, which is another source of uncertainty. And even then the model is not adequate, since it is necessary to apply a relativistic model.

This makes accurate emulation impossible in practice, as demonstrated by the continuous corrections in the ephemerides of GPS satellites, or the adjustments of space travel trajectories, where the journey to Pluto by NASA’s New Horizons spacecraft is a paradigmatic case.

Conclusions

From the previous analysis it can be hypothesized that the universe is an axiomatic system governed by laws that determine a dynamic that is a consequence of the interaction and transference of the entities that compose it.

As a consequence of the interaction and transfer phenomena, the system itself can partially and approximately emulate its own behavior, which gives rise to learning processes and finally gives rise to life and intelligence. This makes it possible for living beings to interact in a complex way with the environment and for intelligent entities to observe reality and establish models of this reality.

This gave rise to abstract representations such as natural language and mathematics. With the development of IT [5] it is concluded that all objects can be represented by a set of bits, which can be processed by axiomatic rules [7] and which optimally encoded determine the complexity of the object, defined as Kolmogorov complexity [6].
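
Kolmogorov complexity itself is not computable, but the length of a compressed encoding gives an upper bound on it that is easy to experiment with. The sketch below uses `zlib` as the compressor; this choice, the helper name `compressed_size` and the two sample sequences are assumptions made for the example.

```python
import random
import zlib


def compressed_size(data: bytes) -> int:
    """Length in bytes of a zlib encoding: a rough upper bound on complexity."""
    return len(zlib.compress(data, 9))


# A highly regular object: the same two symbols repeated 500 times.
regular = b"01" * 500
# A pseudo-random object of the same length (fixed seed for repeatability).
rng = random.Random(0)
noisy = bytes(rng.getrandbits(8) for _ in range(1000))

print(len(regular), compressed_size(regular))
print(len(noisy), compressed_size(noisy))
```

The regular sequence shrinks to a small fraction of its length because a short rule generates it, whereas the noisy one barely compresses at all. The noisy sequence here comes from a seeded generator, so its true complexity is small too; the compressor simply cannot find the rule, which shows why this is only an upper bound.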

The development of TC establishes that these models can be defined as a TM, so that in the limit it can be hypothesized that the universe is equivalent to a Turing machine and that the limits of reality can go beyond the universe itself, in what is defined as the multiverse, which would be equivalent to a UTM. This correspondence between a universe and a TM makes it possible to hypothesize that the universe is nothing more than information processed by axiomatic rules.

Therefore, from the observation of natural phenomena we can extract the laws of behavior that constitute the abstract models (axioms), as well as the information necessary to describe the cases of reality (information). Since this representation is made on a physical reality, its representation will always be approximate, so that only the universe can emulate itself. Since the universe is consistent, models only corroborate this fact. But reciprocally, the equivalence between the universe and a TM implies that the deductions made from consistent models must be satisfied by reality.

However, everything seems to indicate that this way of perceiving reality is distorted by the senses, since at the level of classical reality what we observe are the consequences of the processes that occur at this functional level, from which concepts such as mass, energy and inertia appear.

But when we explore the layers that support classical reality, this perception disappears, since our senses are not capable of observing them directly. What emerges is nothing more than a model of axiomatic rules that process information, and the physical, sensory conception disappears. This would explain the difficulty of understanding the foundations of reality.

It is sometimes speculated that reality may be nothing more than a complex simulation, but this poses a problem, since in such a case a support for its execution would be necessary, implying the existence of an underlying reality necessary to support such a simulation [13].

There are two aspects that have not been dealt with and that are of transcendental importance for the understanding of the universe. The first concerns irreversibility in the layer of classical reality. According to AIT, the amount of information in a TM remains constant, so the irreversibility of thermodynamic systems is an indication that these systems are open, since they do not satisfy this property, an aspect to which physics must provide an answer.

The second is related to the no-cloning theorem. Quantum systems are reversible and, according to the no-cloning theorem, it is not possible to make exact copies of the unknown quantum state of a particle. But according to the recursion theorem, at least two independent processes are necessary to make a copy. This would mean that in the quantum layer it is not possible to have at least two independent processes to copy such a quantum state. An alternative explanation would be that these quantum states have a non-computable complexity.

Finally, it should be noted that the question of whether mathematics was invented or discovered by humans is flawed by an anthropic view of the universe, which considers humans as a central part of it. But it must be concluded that humans are a part of the universe, as are all the entities that make up the universe, particularly mathematics.

References

| [1] | E. P. Wigner, “The unreasonable effectiveness of mathematics in the natural sciences,” Communications on Pure and Applied Mathematics, vol. 13, no. 1, pp. 1-14, 1960. |

| [2] | R. Penrose, The Emperor’s New Mind: Concerning Computers, Minds, and the Laws of Physics, Oxford: Oxford University Press, 1989. |

| [3] | R. Penrose, The Road to Reality: A Complete Guide to the Laws of the Universe, London: Jonathan Cape, 2004. |

| [4] | J.-P. Changeux and A. Connes, Conversations on Mind, Matter, and Mathematics, Princeton N. J.: Princeton University Press, 1995. |

| [5] | C. E. Shannon, “A Mathematical Theory of Communication,” The Bell System Technical Journal, vol. 27, pp. 379-423, 1948. |

| [6] | P. Grünwald and P. Vitányi, “Shannon Information and Kolmogorov Complexity,” arXiv:cs/0410002v1 [cs.IT], 2008. |

| [7] | M. Sipser, Introduction to the Theory of Computation, Course Technology, 2012. |

| [8] | H. Poincaré, New Methods of Celestial Mechanics, Springer, 1992. |

| [9] | A. M. Turing, “On computable numbers, with an application to the Entscheidungsproblem,” Proceedings, London Mathematical Society, pp. 230-265, 1936. |

| [10] | A. W. Senior, R. Evans et al., “Improved protein structure prediction using potentials from deep learning,” Nature, vol. 577, pp. 706-710, Jan 2020. |

| [11] | S. Kleene, “On Notation for Ordinal Numbers,” J. Symbolic Logic, vol. 3, pp. 150–155, 1938. |

| [12] | A. Savine, Modern Computational Finance: AAD and Parallel Simulations, Wiley, 2018. |

| [13] | N. Bostrom, “Are We Living in a Computer Simulation?,” The Philosophical Quarterly, vol. 53, no. 211, pp. 243–255, April 2003. |